Learning Real-world Visuo-motor Policies from Simulation

This is my Ph.D. project at the Australian Centre for Robotic Vision at QUT, supervised by Prof. Peter Corke, Dr. Jürgen Leitner, Prof. Michael Milford and Dr. Ben Upcroft.

Learning Planar Reaching in Simulation

Robotic Planar Reaching in the Real World

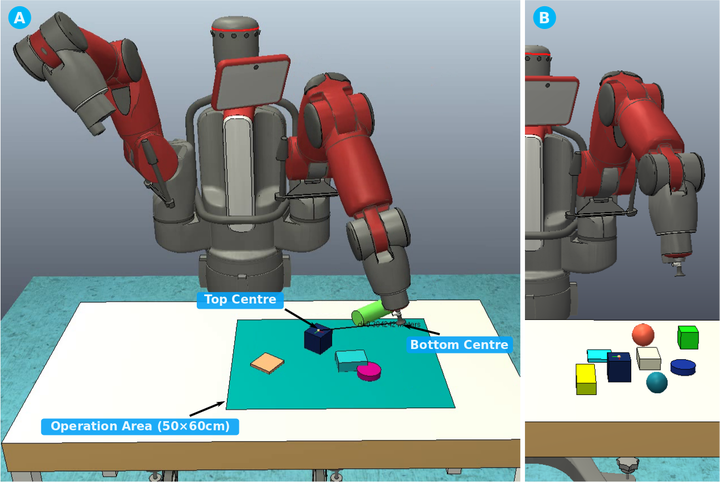

Learning Table-top Object Reaching with a 7 DoF Robotic Arm from Simulation

Contributions:

- Analysed the feasibility of learning vision-based robotic planar reaching with DQNs in simulation.

- Proposed a modular deep Q-network architecture for fast, low-cost transfer of visuo-motor policies from simulation to the real world.

- Proposed an end-to-end fine-tuning method using weighted losses to improve hand-eye coordination.

- Proposed a kinematics-based guided policy search method (K-GPS) to speed up Q-learning for robotic applications where kinematic models are known.

- Demonstrated the methods on reaching tasks with a real Baxter robot in velocity and position control modes, e.g., planar reaching and table-top object reaching in clutter.

- Further investigation is under way into semi-supervised and unsupervised transfer from simulation to the real world using adversarial discriminative approaches.
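
To make the idea of fine-tuning with weighted losses more concrete, here is a minimal sketch in plain Python. It is an illustration only, not the project's implementation: the function names, the weight `beta`, and the choice of a convex combination of a perception loss and a control loss are all assumptions made for this example.

```python
def mse(pred, target):
    """Mean squared error between two equal-length sequences of numbers."""
    if len(pred) != len(target):
        raise ValueError("pred and target must have the same length")
    return sum((p - t) ** 2 for p, t in zip(pred, target)) / len(pred)


def weighted_loss(perception_loss, control_loss, beta=0.5):
    """Blend two loss terms into one scalar (hypothetical formulation).

    beta = 1.0 trains only against the perception loss, beta = 0.0 only
    against the control loss; values in between trade the two off.
    """
    if not 0.0 <= beta <= 1.0:
        raise ValueError("beta must lie in [0, 1]")
    return beta * perception_loss + (1.0 - beta) * control_loss


# Toy numbers, chosen only to exercise the functions.
perception = mse([0.1, 0.4], [0.0, 0.5])   # e.g. error of an estimated scene state
control = mse([1.0, 2.0], [1.5, 2.0])      # e.g. error of predicted Q-values
total = weighted_loss(perception, control, beta=0.25)
```

In a real training loop the two terms would come from the perception and control parts of the network, and `beta` would be a tuning parameter.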